More or Less

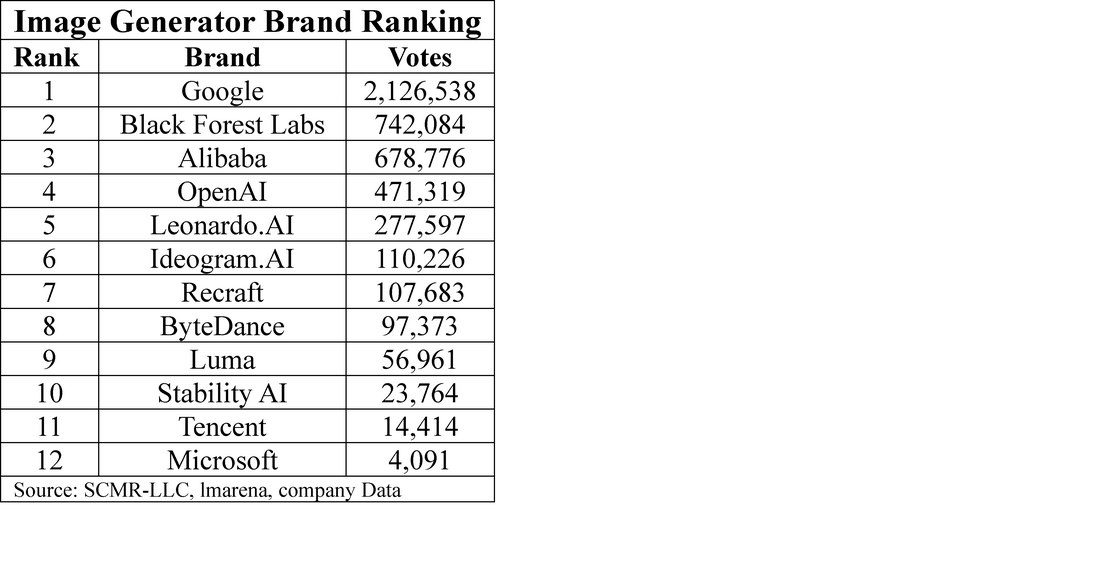

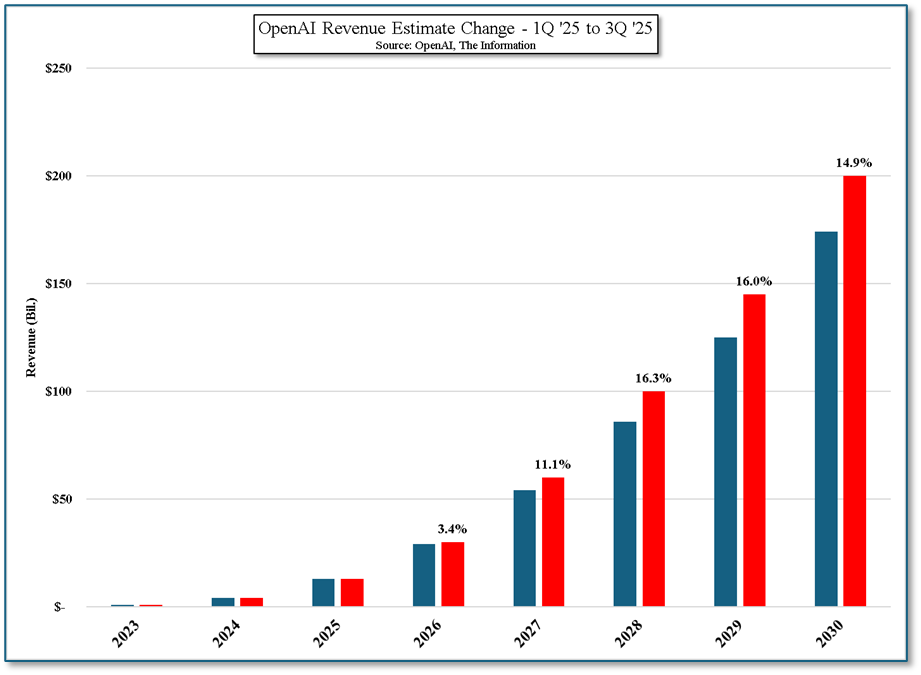

While spending on data centers continues to grow and new deal announcements are almost a daily event, our concern is rooted in the sales and profitability of AI vendors as AI passes from the “First Date” phase into the “Now that I’ve met your parents” phase in 2027/2028. Expectations are extremely high for vendors like OpenAI (pvt) and Anthropic (pvt). Current spending will likely provide the capacity needed to meet aggressive future sales targets, but our concern, coming from the CE space, is whether there will be enough customers paying a reasonable rate to justify those expectations and data processing costs.

We are very price conscious and track product pricing and unit volumes for a number of key CE products, but the AI space is far more esoteric, at least at this stage, which makes it easier for companies to develop lofty expectations and corresponding valuations. According to the vendors, everyone from multinationals to small businesses will be using AI and paying for it, and MAU growth will continue almost forever. That is never the case, however, so we focus our attention on AI pricing, as we expect the competitive nature of the AI business to become closely linked to the growth of the AI space.

Right now AI is new and model performance is improving. While AI ROI for corporations is still a bit of a mystery, corporate efficiency improvements are at the forefront, and CEOs are willing to spend whatever is necessary to show their participation in such gains. But what if someone comes up with a model that is almost as good but much cheaper? Do CEOs look to save money, or will they need to show their investors that they are using the best models possible? Should they opt for older, less expensive models? And if MAU growth slows for AI vendors, will changes to monthly fees and API rates shine a light on those infinite growth projections? None of those circumstances would be out of character relative to the path taken by the myriad CE products that have been developed and hyped over the last 20 or 30 years, so we are always on the lookout for indications that competition is increasing or the profitability situation is changing.

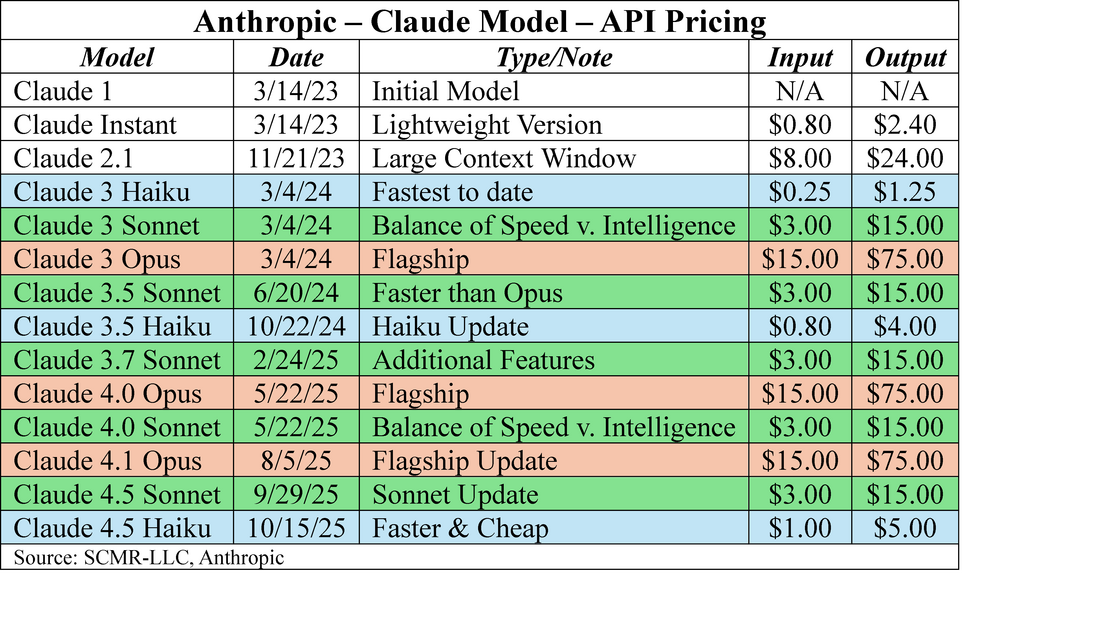

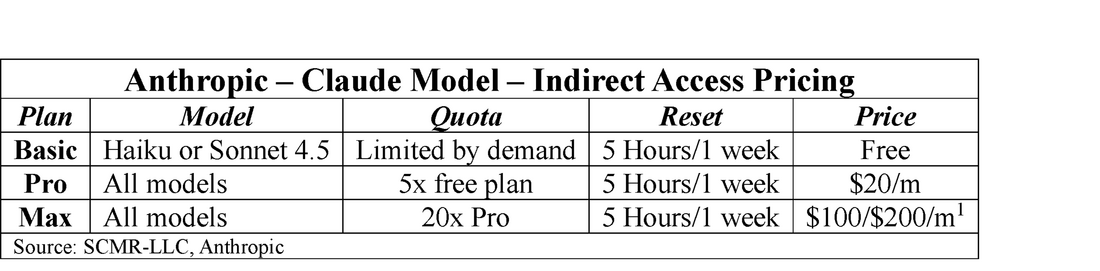

Anthropic is one of the top AI vendors, with its Claude models. The company offers two pricing strategies: subscriptions and usage-based API access. The API is a way for both small businesses and enterprises to access the models. Small businesses use the API to integrate with internal applications or websites without having to build complex systems, and to manage usage costs on a pay-as-you-go basis, with the ability to scale as the company grows. Enterprise customers use the API to integrate high-volume applications and operations across a variety of departments, typically requiring greater control and SLAs (Service Level Agreements).

Anthropic has three basic models. Opus is the company’s flagship model, the most sophisticated of the three and typically used for tasks that require advanced reasoning, complex math, or coding. Sonnet is a more balanced model that is lighter, faster, and more cost-efficient, while Haiku is the most cost-conscious model. That said, with each new iteration Sonnet and Haiku can outperform Opus and each other in features like speed and reasoning capabilities, until the next update. As the updates get closer together, the competition among a brand’s own models increases, just as it does with outside models, yet prices do not go down.

The problem is that most, if not all, AI vendors give relatively little information about sales and even less about profitability, typically focusing on MAUs (Monthly Active Users) to show growth. MAUs are a vanity metric that has no bearing on sales, as the number of free users is typically quite high. At the same time, those free users cost the developer money, as their queries are processed at the input/output rate. So, on a general basis, paying users (either API or subscription) finance the cost of free users. Subscription users are hit or miss: low-volume subscription users are highly profitable, while high-volume subscription users are highly unprofitable (although less unprofitable than free users). This means that the API users, who pay on a per-token basis, finance all of those MAUs (subscription or free) that are unprofitable.
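To make the cross-subsidy concrete, here is a minimal sketch of per-user monthly margin by segment. Every number in it (the serving cost per million tokens, the subscription fee, the API price, and the usage levels) is a hypothetical placeholder chosen only for illustration; none of it comes from Anthropic or any other vendor, which do not disclose these figures.

```python
# All figures below are hypothetical placeholders, not vendor data.
SERVING_COST_PER_MILLION_TOKENS = 5.00  # assumed cost to process 1M tokens (USD)


def monthly_margin(revenue_usd: float, tokens_used: int) -> float:
    """Revenue minus the assumed cost of processing the user's tokens."""
    serving_cost = tokens_used / 1_000_000 * SERVING_COST_PER_MILLION_TOKENS
    return revenue_usd - serving_cost


# (segment, monthly revenue in USD, tokens processed per month), all assumed
segments = [
    ("free user", 0.00, 2_000_000),
    ("low-volume subscriber", 20.00, 1_000_000),
    ("high-volume subscriber", 20.00, 5_000_000),
    ("API user", 60.00, 10_000_000),  # pays per token, here at an assumed $6 per 1M
]

margins = {name: monthly_margin(revenue, tokens) for name, revenue, tokens in segments}

for name, margin in margins.items():
    print(f"{name:24s} {margin:+.2f} USD/month")
```

Under these assumed inputs the free user and the high-volume subscriber both run at a loss, the low-volume subscriber is profitable, and the API user's margin depends entirely on the gap between the per-token price and the per-token serving cost.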

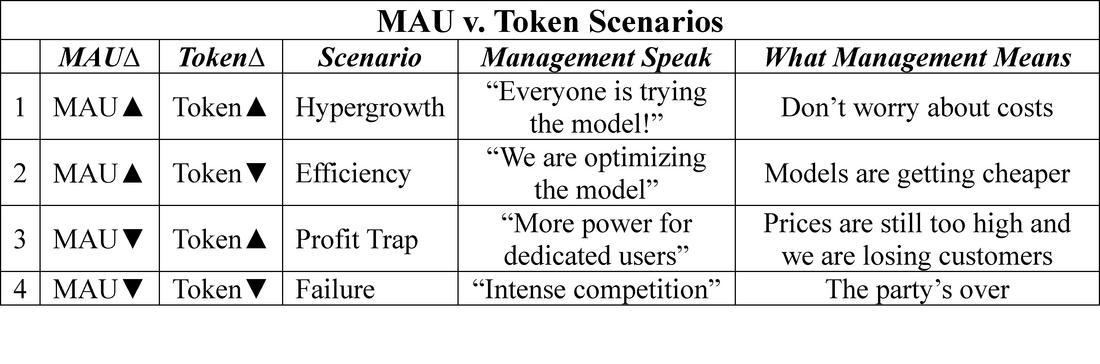

MAU metrics can be helpful on a general basis, but not if they stand alone. If one has MAU growth metrics and token growth metrics the following four basic scenarios can be discerned:

[1] We note that tokens can be words, sub-words, or even individual letters. The Claude tokenizer produces 33% more tokens than a word count would generate.
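As a rough illustration of that note, the sketch below converts a word count into an estimated token count and then into a cost. The 1.33 ratio is the figure from the note above; the function names and the per-million-token price passed in are hypothetical, and real token counts vary with the text and the tokenizer version.

```python
TOKENS_PER_WORD = 1.33  # from the note above: about 33% more tokens than words


def estimate_tokens(word_count: int) -> int:
    """Approximate token count for a given number of words."""
    return round(word_count * TOKENS_PER_WORD)


def estimate_cost_usd(word_count: int, price_per_million_tokens: float) -> float:
    """Approximate cost of processing the text at an assumed per-token price."""
    return estimate_tokens(word_count) / 1_000_000 * price_per_million_tokens


# Example: a 1,000-word document at a hypothetical $1.00 per million tokens
print(estimate_tokens(1000))
print(estimate_cost_usd(1000, 1.00))
```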

“Claude Haiku 4.5, our latest small model, is available today to all users. What was recently at the frontier is now cheaper and faster. Five months ago, Claude Sonnet 4 was a state-of-the-art model. Today, Claude Haiku 4.5 gives you similar levels of coding performance but at one-third the cost and more than twice the speed.” “Claude Haiku 4.5 gives users a new option for when they want near-frontier performance with much greater cost-efficiency.”

There are metrics behind that statement. In agentic software engineering (coding), the accuracy of Haiku 4.5 is 73.3%, while the accuracy of Sonnet 4.5, released two weeks earlier, was 77.2%. That is a difference of less than 4 percentage points for 1/3 of the price of Sonnet 4.5, along with being faster (unconfirmed) than Sonnet (we believe Opus is still faster).
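The trade-off in those figures can be worked through in a few lines. The sketch below uses only the numbers quoted above (73.3%, 77.2%, and the one-third price ratio); the function names are ours, and "accuracy per unit of price" is an illustrative yardstick we are assuming here, not a metric Anthropic publishes.

```python
HAIKU_ACCURACY = 73.3   # % on the agentic coding benchmark, from the text
SONNET_ACCURACY = 77.2  # % on the same benchmark, from the text
HAIKU_RELATIVE_PRICE = 1 / 3  # Haiku 4.5 price as a fraction of Sonnet 4.5's


def accuracy_gap_points() -> float:
    """Absolute gap between the two models, in percentage points."""
    return SONNET_ACCURACY - HAIKU_ACCURACY


def relative_accuracy_loss() -> float:
    """Share of Sonnet's accuracy given up by choosing Haiku."""
    return accuracy_gap_points() / SONNET_ACCURACY


def accuracy_per_price_advantage() -> float:
    """Haiku's accuracy per unit of price, as a multiple of Sonnet's."""
    return (HAIKU_ACCURACY / HAIKU_RELATIVE_PRICE) / SONNET_ACCURACY


print(f"gap: {accuracy_gap_points():.1f} points")
print(f"relative loss: {relative_accuracy_loss():.1%}")
print(f"accuracy per price, Haiku vs Sonnet: {accuracy_per_price_advantage():.2f}x")
```

On these figures, a buyer gives up about 5% of Sonnet's benchmark accuracy in exchange for roughly 2.8 times the accuracy per unit of price, which is the kind of gap that makes the "almost as good but much cheaper" question a real one.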

This Anthropic release (Haiku 4.5), although it mimics previous Sonnet/Haiku release pairs, is the second time Anthropic has raised the API price of Haiku, and that makes us wonder whether API customers are unable to cover the cost of subscription and free plan customers, essentially moving from scenario 1 to scenario 2. It is impossible to know whether this is the case given the private status of Anthropic, but if nothing else it serves as a bit of cautionary data that might help in understanding how investments in companies on the periphery of AI might play out over time. There are lots of good things about AI, and each market bubble over the last 50 years has led to improvements in technology or lifestyle, so we are not calling the end of the AI cycle, only looking for signs that change might be afoot. Sometimes indicators can be flukes and the bubble continues to expand, and other times the handwriting is on the wall for all to see. The point is to always be looking.
