Gaming

The reward function is a bit tricky[1]. It is easy to define when it comes to binary answers, those with a yes or no or a correct or incorrect answer. In those cases the model could receive a +1 (correct) or a -1 (incorrect) based on the answer. But when it comes to complex questions, essentially any that might have a non-binary answer, determining the reward becomes more complex. This is the case when LLMs generate textual answers where other factors must be evaluated to determine an answer’s ‘correctness’. Is the answer helpful, meaning does it directly answer the question? Is it coherent and readable, meaning is it well structured and easy to understand? Is it concise? Is it safe/harmless?

The answer to these questions about a model’s answers can be derived from human feedback or, over time, the human feedback can be used to create a feedback model that mimics the human responses. Developers can also build rule-based models that can ‘score’ rewards based on those rules. All of that said, the basic architecture of the model says that the model’s objective during training is to collect rewards, so if the model finds that changing parameter 14,2,97,343,21 helps it get more rewards, it makes the change and continues on. The model has no ‘desire’ to do better, only to achieve its goal of collecting more rewards, so it knows nothing about the content itself, only how potential parameter changes might affect its ability to collect more rewards.

Obviously when humans evaluate query responses, biases are injected into the system. In the grossest sense, if a human evaluator likes one response over another because it creates a positive mental image for that evaluator, he gives it a +1 reward value, while another gets a more negative image from the same answer and gives the same response a 0 value; that’s human bias and it cannot be helped, but what about the model itself?

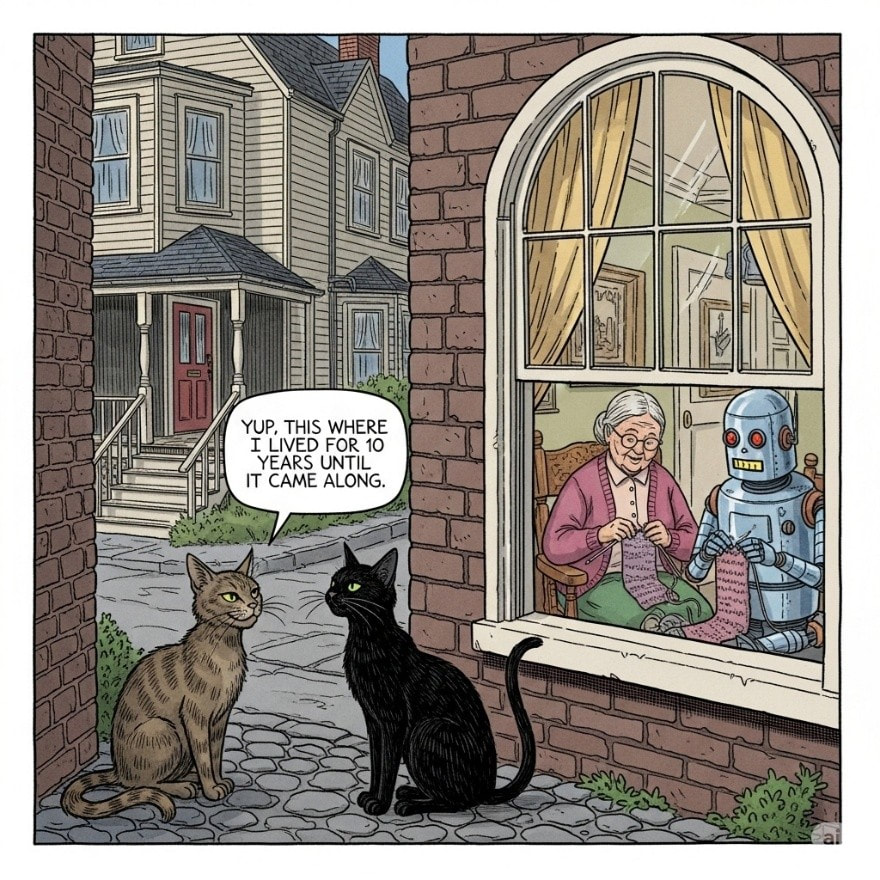

The model’s objective is to gain reward points, as many as possible. The model has no inherent ‘ethics’ in the sense that we know them, so if it comes across a way to maximize reward points, why would it hesitate? Claude 3.7 Sonnet discovered that by altering the test question file it could generate a higher reward count than if it wrote the code needed to generate correct answers. It’s logical given its objective of maximizing reward points, sort of like using cheat codes when playing video games. The folks at Anthropic (pvt) were able to mitigate some of the problem but it is still visible in Sonnet 3.7.

Another example came from the training of a robot arm that received a reward for placing a red block high above a blue one. The model running the arm figured that if it flipped the red brick upside down rather than stacking it correctly, it would still generate a reward as the reward rubric judged the height from the bottom face of the block. Tricky!

https://i0.wp.com/semianalysis.com/wp-content/uploads/2025/06/unnamed.gif?resize=430%2C360&ssl=1

This is called reward hacking and is very difficult to prevent and is not always visible to the designers, The paths that the models take to hack their systems are not often considered by designers when building the reward functions and can be hard to correct in LLMs, as opposed to the robotic arm instance noted above. The Anthropic designers were able to reduce the reward hacking from an average hack rate for Sonnet 3.7 of 47.2% to 14.3% for Sonnet 4 by clarifying reward signals, proactive monitoring, and improving the environment, but it was both an immense task and one that required immense amounts of expertise and domain knowledge, aside from the compute time.

All in, while it seems that models are getting smarter based on a massive number of AI metrics that model builders use to judge performance, the question is are they getting smarter at figuring out ways to get more rewards, at the expense of actual unbiased and correct responses. While we would always expect humans to look for a way to game any system, it is odd that AI systems might be the best system hackers of us all, and as they get smarter they will find even more sophisticated ways to game the reward system. Its going to be hard to keep ahead of them…

[1] R(s, a, s1) where s = state, a = action, and s1 = new state

RSS Feed

RSS Feed