The Boss is Back: Nvidia’s AI Chips Return to China Market

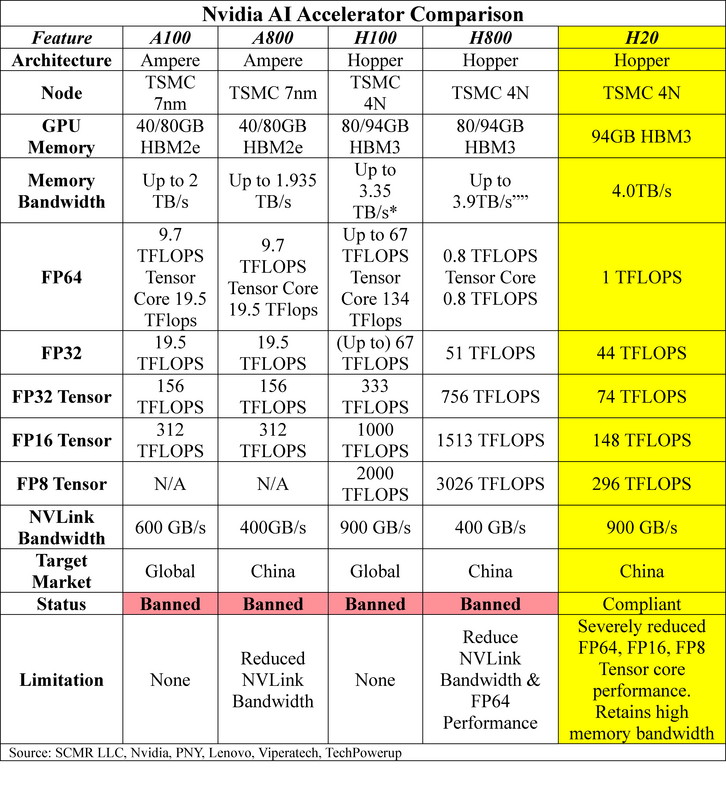

To show how the Nvidia H20 processor compares with the currently banned AI chips, here is a detailed comparison table. More importantly, we break down what these technical metrics mean for AI and high-performance computing.

- Architecture – Newer is typically better for GPU performance. Blackwell architecture is Nvidia’s latest innovation but is currently banned for export to China. Nvidia is expected to release a ‘de-featured’ Blackwell GPU for China that falls below government-mandated AI chip performance limits. Hopper architecture was announced in 2022, while Ampere architecture was announced in 2020.

- Node – A lower designation typically indicates a more advanced manufacturing process. TSMC’s N7[1] process was released in 2018 and uses DUV (Deep Ultraviolet) lithography. It delivered a 35% - 40% improvement in speed or 65% lower power consumption versus the 16nm process node. TSMC’s N4 process was released in 2022 and uses EUV (Extreme Ultraviolet) lithography. Compared with N5, N4 showed an 11% performance boost, 22% higher power efficiency, and 6% higher transistor density.

- GPU Memory – Higher memory capacity and speed are crucial for AI workloads. HBM2e (an extended version of second-generation High Bandwidth Memory) was released in late 2019 and supports stacks of 8 DRAM dies. It runs at up to 3.6Gbps per pin, giving each stack 460GB/s of bandwidth. HBM3 (2022) supports 12-die stacks. It runs at up to 6.4Gbps per pin, nearly double the data rate of the previous version, and the latest HBM3e extends that to 9.6Gbps.

- Memory Bandwidth – More is better for data intensive tasks.

- FPxx – These represent floating-point data types at various precision levels. FP64 (Double Precision) is the highest precision level, while FP16 (Half Precision) is less precise but consumes much less memory and compute resources, making it a common choice for deep learning tasks. For all of them, higher TFLOPS (Tera Floating Point Operations per Second) means higher performance, but tradeoffs are sometimes necessary to manage costs. Note that these Nvidia GPUs feature both CUDA (General Purpose) cores and Tensor (Multi-matrix) cores, and we show performance for both.

- NVLink – This is Nvidia’s proprietary high-speed interconnect that is used between GPUs. It provides higher bandwidth and lower latency than PCIe. It also allows Nvidia GPUs to directly access each other’s memory, so that memory can be ‘pooled’ rather than individually managed.

- Target Market – The initial market the GPU was designed for.

- Current Ex/Im status – The current export/import status under US regulations.

- Limitations – Some details on how formerly compliant devices met rules.
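The per-stack bandwidth figure in the GPU Memory bullet follows directly from the per-pin data rate. The short Python sketch below shows the arithmetic. It assumes a 1024-bit interface per stack, which is the standard HBM width but is not stated in this article, and the function name is purely illustrative.

```python
# Per-stack bandwidth (GB/s) = per-pin data rate (Gbps) x interface width (bits) / 8.
# Assumption (not from the article): each HBM stack has a 1024-bit interface.
HBM_BUS_WIDTH_BITS = 1024


def stack_bandwidth_gbs(pin_rate_gbps: float,
                        bus_width_bits: int = HBM_BUS_WIDTH_BITS) -> float:
    """Return the peak bandwidth of one memory stack in GB/s."""
    return pin_rate_gbps * bus_width_bits / 8


# Per-pin rates quoted in the GPU Memory bullet.
for pin_rate in (3.6, 6.4, 9.6):
    bandwidth = stack_bandwidth_gbs(pin_rate)
    print(f"{pin_rate} Gbps per pin: {bandwidth:.1f} GB/s per stack")
```

At 3.6Gbps per pin this works out to roughly 460GB/s per stack, matching the figure above. A GPU's total memory bandwidth then depends on how many stacks it carries.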

[1] Note that the Nx or Xnm designations do not always correspond to actual sizes. N4 is actually part of the N5 ‘family’.
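To make the FPxx precision tradeoff concrete, here is a minimal Python sketch that stores the same number at three precision levels using only the standard library. It illustrates the data formats themselves and is not a measure of GPU performance. The helper name `round_trip` and the choice of 1/3 as the test value are ours.

```python
import struct

# struct format codes for IEEE 754 double, single, and half precision.
FORMATS = {"FP64": "<d", "FP32": "<f", "FP16": "<e"}


def round_trip(value: float, fmt: str) -> float:
    """Store a value in the given format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


value = 1 / 3
for name, fmt in FORMATS.items():
    size_bytes = struct.calcsize(fmt)
    stored = round_trip(value, fmt)
    error = abs(stored - value)
    print(f"{name}: {size_bytes} bytes per value, "
          f"stored as {stored!r}, error {error:.2e}")
```

FP16 needs a quarter of the memory of FP64 per value, at the cost of a visibly larger rounding error. That is the tradeoff deep learning workloads often accept.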
