Wanted: VP of AI Procurement

Given the rapid changes in model pricing, we dig deeper into the AI landscape to understand how model pricing relates to model costs, both initial and ongoing, and what other costs are associated with model infrastructure and total cost of ownership (TCO), especially in light of the performance metrics developers use to justify their pricing. As organizations adopt AI, they cannot afford to let it become a ‘Black Box’ without real cost justification, and those that incorporate AI into consumer products need to understand its real costs and benefits. It is a complex and rapidly changing picture. The usual technological advantage over Chinese companies does not exist, which makes for a more level playing field in model competition, and the commercial aspects of AI are so new that there is no clear company, technology, or regional winner, making it a truly open field.

To understand how AI pricing is evolving, we started by looking at common pricing models. The predominant one for generative AI is token-based pricing, in which costs are calculated per unit of text (token); a token is a short chunk of text, so token counts scale roughly with the number of characters. Costs for both input (query or data) and output (response) are quoted per million tokens, so AI application developers and users pay only for what they actually use. For AI services such as image generation, however, per-unit or per-request pricing is most common. The well-known DALL-E image generation model charges $0.02 for a standard resolution image, while others offer a small number of free images and then charge a per-image fee. Speech-to-text (transcription) services typically charge a per-minute rate, although volume can bring that cost down.
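As a rough illustration of how token-based billing adds up, the sketch below computes the cost of a single request. The rates and token counts are hypothetical placeholders, not any vendor's actual prices, and the function name `token_cost` is our own.

```python
def token_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost in dollars of one request when prices are quoted per million tokens."""
    input_cost = (input_tokens / 1_000_000) * input_price_per_m
    output_cost = (output_tokens / 1_000_000) * output_price_per_m
    return input_cost + output_cost


# Hypothetical rates (dollars per million tokens), for illustration only.
INPUT_PRICE_PER_M = 0.50
OUTPUT_PRICE_PER_M = 1.50

# Hypothetical request: a 2,000-token prompt and a 500-token response.
request_cost = token_cost(2_000, 500, INPUT_PRICE_PER_M, OUTPUT_PRICE_PER_M)
print(f"Cost of one request: ${request_cost:.5f}")
```

Because output tokens are usually priced higher than input tokens, a workload that generates long responses can cost noticeably more than one that mostly reads long prompts, even at the same total token count.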

Subscription-based pricing is more common for team-oriented development and can be a management favorite because it allows for consistent budgeting. The newest pricing model is pay-by-task, a form of incentive-oriented pricing in which a monthly fee is charged for projects with measurable results, such as fixing a bug or adding a feature.

Regardless of the pricing mechanism used, models have to justify their pricing, and we see a large gap between performance metrics and real-world performance, especially as LLMs are quite different from traditional machine learning applications, where the correct answer is usually clear. An LLM can generate a number of ‘correct or acceptable’ answers to a common query, making evaluation a more subjective process. Some core performance metrics, primarily those obtained through human ‘raters’, reflect the values and preferences of those raters, whether or not they are aware of their biases. The raters act as judges, deciding whether answers are factually correct and how well they relate to the real world. They have to evaluate coherence and fluency, whether the output is safe, and how ‘creative’ the model is when answering, all of which are qualitative and subjective evaluations.

There are also quantitative metrics used to develop pricing models, based on algorithms that compare output to a reference text or some other form of reference. These can be complex formulas that measure a wide variety of model capabilities. Here are some examples:

- Perplexity – How well an LLM predicts text when presented with new data. Lower scores indicate less ‘surprise’ when a model encounters new data.

- Embedding-Based Semantic Similarity (BERTScore) – This metric evaluates the semantic similarity between generated text and reference text using contextual embeddings. It is intended to capture the deeper meaning of text rather than relying on string matching alone.

- Generative Quality Metrics (MAUVE) – These metrics evaluate how closely the model output resembles human language distribution.
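To make the first of these metrics concrete, here is a minimal sketch of perplexity computed from the probabilities a model assigned to each token that actually appeared. The probability lists are invented for illustration; a real evaluation would take them from a model's output over a held-out text.

```python
import math


def perplexity(token_probs):
    """Perplexity: exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("token_probs must not be empty")
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)


# Invented per-token probabilities, for illustration only.
confident_model = [0.90, 0.80, 0.95, 0.85]  # model expected most of the tokens
uncertain_model = [0.20, 0.10, 0.30, 0.25]  # model was frequently 'surprised'

print(f"Confident model perplexity: {perplexity(confident_model):.2f}")
print(f"Uncertain model perplexity: {perplexity(uncertain_model):.2f}")
```

The model that assigned higher probabilities to the observed tokens ends up with the lower perplexity, which is why a lower value is read as less ‘surprise’.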

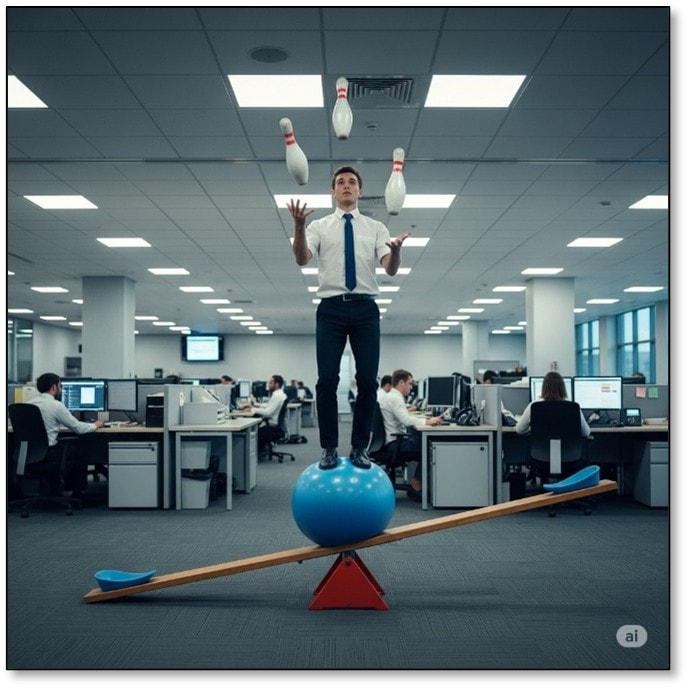

While we could go deeper into pricing models for individual AI applications or the rationale behind the various pricing models themselves, the real truth behind pricing is simple: “What will the market pay?” If the metrics were identical for every model, pricing would be simple, but developers are quick to point out that ‘their’ model is a bit better than another and should carry a higher price, a claim usually backed up with carefully chosen or even specifically designed metrics that reflect their optimism. Given the subjective nature of AI metrics, however, trying to evaluate pricing from such a perspective is like trying to juggle bowling pins while balancing on a ball that is sitting on a seesaw. But for once the pricing paradigm is moving in the right direction for the consumer and the business, and competition seems to be having a greater impact on AI pricing than any other factor in the AI space.

From the perspective of the developer, price reductions are a gift to consumers brought about by technological improvements and efficiency. Behind the florid prose, however, those generous developers still have one eye on what the competition is doing. If discounting seems necessary to attract or retain customers, model developers appear willing to adjust their pricing, especially at a time when model company valuation has little to do with profitability and more to do with momentum. On the consumer side, businesses are still trying to figure out where AI sits between being a cost center and a profit center, but for now it is almost a prerequisite that a business has some sort of AI development project underway, and with prices coming down precipitously, the pressure is off, at least for a while.

However, that price deflation also comes at a cost. While commodity AI prices are declining, specialized AI services are getting more expensive, creating a more complex picture for AI buyers. Is it cheaper to build than buy? Is it cheaper to host or outsource? Do I want to build an AI development team or buy packaged products? Google recently folded Gemini AI into Google Workspace, which led to a price increase for the entire suite, while Gemini Flash 8B, released only 2 months after Gemini Flash 1.5, saw an input price drop from $0.075 to $0.0375 per million tokens and an output price drop from $0.30 to $0.15 per million tokens. This means that static pricing plans are likely a thing of the past and that pricing will change with every new model, perhaps leading to the new position of ‘VP of AI Procurement’. But in all seriousness, AI has moved from being an ‘on the box’ logo to being a necessary component of operational R&D, and it presents a complex path for businesses that have had little experience with the technology, with AI cost structures, or with building AI applications.
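To show what the Gemini price change quoted above could mean for a budget, the sketch below applies both sets of prices to the same workload. The prices come from the text and are assumed to be per million tokens, in line with the token-pricing convention described earlier; the monthly token volumes are hypothetical.

```python
# Prices as quoted in the text, assumed to be dollars per million tokens.
FLASH_1_5 = {"input": 0.075, "output": 0.30}
FLASH_8B = {"input": 0.0375, "output": 0.15}


def monthly_bill(prices, input_tokens, output_tokens):
    """Monthly cost in dollars for a given token volume."""
    return (prices["input"] * input_tokens + prices["output"] * output_tokens) / 1_000_000


# Hypothetical monthly workload, for illustration only.
INPUT_TOKENS = 500_000_000
OUTPUT_TOKENS = 100_000_000

old_bill = monthly_bill(FLASH_1_5, INPUT_TOKENS, OUTPUT_TOKENS)
new_bill = monthly_bill(FLASH_8B, INPUT_TOKENS, OUTPUT_TOKENS)
saving_pct = (old_bill - new_bill) / old_bill * 100

print(f"Old bill: ${old_bill:.2f}, new bill: ${new_bill:.2f}, saving: {saving_pct:.0f}%")
```

Since both the input and output prices were halved, the bill halves regardless of the mix of input and output tokens. A buyer comparing models with uneven price changes would need to plug in their own mix.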

[1] Standard metric for assessing image quality that measures statistical distribution of generated images against a set of real images.

[2] This measures the semantic similarity between an image and its corresponding text prompt.

[3] Measures how well the generated image accurately reflects details, objects, spatial relationships, and attributes that were defined in the text prompt.
