AI Q&A – A Taste

Some of the data consists of word counts. We tracked answer word counts alongside improvements or regressions in the answers to see whether the AIs became more succinct and how that correlated with correctness, the ultimate goal. Here is a taste of what we found (more to come):

Here are the AIs we queried. Please note that some are more forthcoming about their model and version than others. Microsoft’s (MSFT) Co-Pilot was the least willing to give such information, stating only that it was developed by Microsoft, while others were quite specific.

| AI | Developer (ticker) | Model/version |
| --- | --- | --- |
| Gemini | Google (GOOG) | Flash/Pro 2.5 |
| Claude | Anthropic (pvt) | Sonnet 4.0 |
| MetaAI | Meta (META) | Non-specific Llama model |
| Co-Pilot | Microsoft | Unknown |
| ChatGPT | OpenAI (pvt) | GPT-4 Turbo |
| DeepSeek | DeepSeek (pvt) | V3 |
| Perplexity | PerplexityAI (pvt) | GPT-4o/Claude Sonnet 4.0 |
| Grok | xAI (pvt) | 3 |

We also note that the questions asked last week were identical to those asked in March, and the only change we made to the AIs’ responses was to remove lead-ins to further questions when they were irrelevant.

Here is Question #1:

The question is derived from a nursery rhyme/tongue twister inspired by a 19th-century British fossil collector, Mary Anning, and set to music and lyrics by Harry Gifford and Terry Sullivan in 1908. The idea was to present the rhyme with no context to see if the AIs were able to discern its origin, its point, or anything else about the song. As it turned out, in the most recent query DeepSeek was able to understand the reference, a large improvement over the March query, where it did not understand the reference at all and did not present an answer. Grok also took a step forward. In its original response it mistakenly assumed the reference might concern a specific person, brand, or company (specifying “Shein”), but this time it was able to state that the phrase is a “well-known tongue twister linked to Mary Anning…”. Grok did add that it could also be referring to a remote sales program by Shelby Sapp called “She Sells”, but credit must certainly be given for the correct answer regardless of the add-on.

Meta stated that “Without more context, it's hard to pinpoint exactly what "She" sells or where.” (zero credit), with a similar response in March. Co-Pilot and ChatGPT did the same both times, each slightly differently. Most surprising was Claude’s current response, “I need more context”, because in the March query Claude was the only AI that identified the rhyme. Gemini was also unable to answer without more information, although in March it speculated that “‘she’ can refer to various individuals engaged in reselling. Therefore, ‘she’ can sell a wide variety of items in different locations, including:…” and went on to list a large number of possible clothing items, handmade items, or accessories. It therefore seems worse that Gemini came up with no answer this time, when it at least speculated a bit last time.

In terms of word count, it would seem logical that those unable to come up with an answer would see the number of words used decline from March, or at least stay the same. That was the case overall, as the total number of words in the responses to question #1 declined by 23.3% relative to the March total. The anomaly was Co-Pilot, which was unable to come up with an answer either time but saw its word count increase by 13.3%. DeepSeek, one of two to correctly answer question #1, saw its word count increase by 33.1% as it produced a relevant answer. Grok, however, was also able to produce the correct answer and saw its word count decrease by 23.3%, essentially saying more with fewer words.
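For readers who want to reproduce this kind of comparison, here is a minimal sketch of the percent-change arithmetic behind the figures above. The function name `pct_change`, the labels `AI-A` and `AI-B`, and the word counts are hypothetical placeholders chosen for illustration; they are not our measured data.

```python
def pct_change(march_words: int, june_words: int) -> float:
    """Percent change in word count from the March response to the later response."""
    if march_words <= 0:
        raise ValueError("March word count must be positive")
    return (june_words - march_words) / march_words * 100.0


# Hypothetical word counts (March, latest), for illustration only.
example_counts = {
    "AI-A": (150, 170),  # response got longer
    "AI-B": (300, 230),  # response got shorter
}

# Per-AI change, rounded to one decimal place as in the text.
changes = {
    name: round(pct_change(march, latest), 1)
    for name, (march, latest) in example_counts.items()
}

# Change in the combined total across all responses.
total_march = sum(march for march, _ in example_counts.values())
total_latest = sum(latest for _, latest in example_counts.values())
total_change = round(pct_change(total_march, total_latest), 1)

print(changes, total_change)
```

Note that the change in the combined total is weighted toward the longer responses, so it will generally differ from a simple average of the per-AI percentages.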

With two AIs improving, two declining, and the rest remaining unable to answer the question, little progress has been made overall on question #1. We have 9 other questions, each with its own characteristics, and results that are quite telling, so there is plenty more to come over the next few days, including a summary of the data and a conclusion. As we sift through the data, we note that some of the questions require a more qualitative view, such as question #9, which asks the AIs to “Create a 12-line poem about a grandfather clock in the style of Edgar Allen Poe”, and which we compare to the poems written in March. We are neither educators nor critics, but the differences are more than obvious in many cases, as they are in some of the other questions.

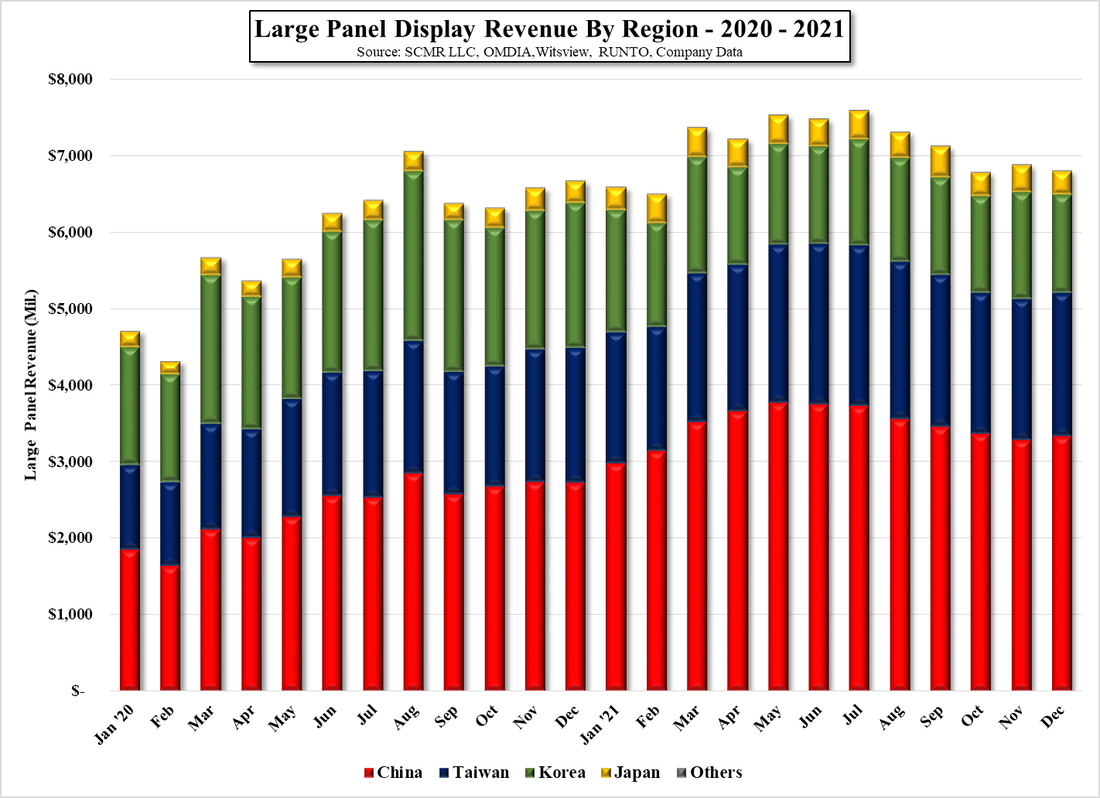

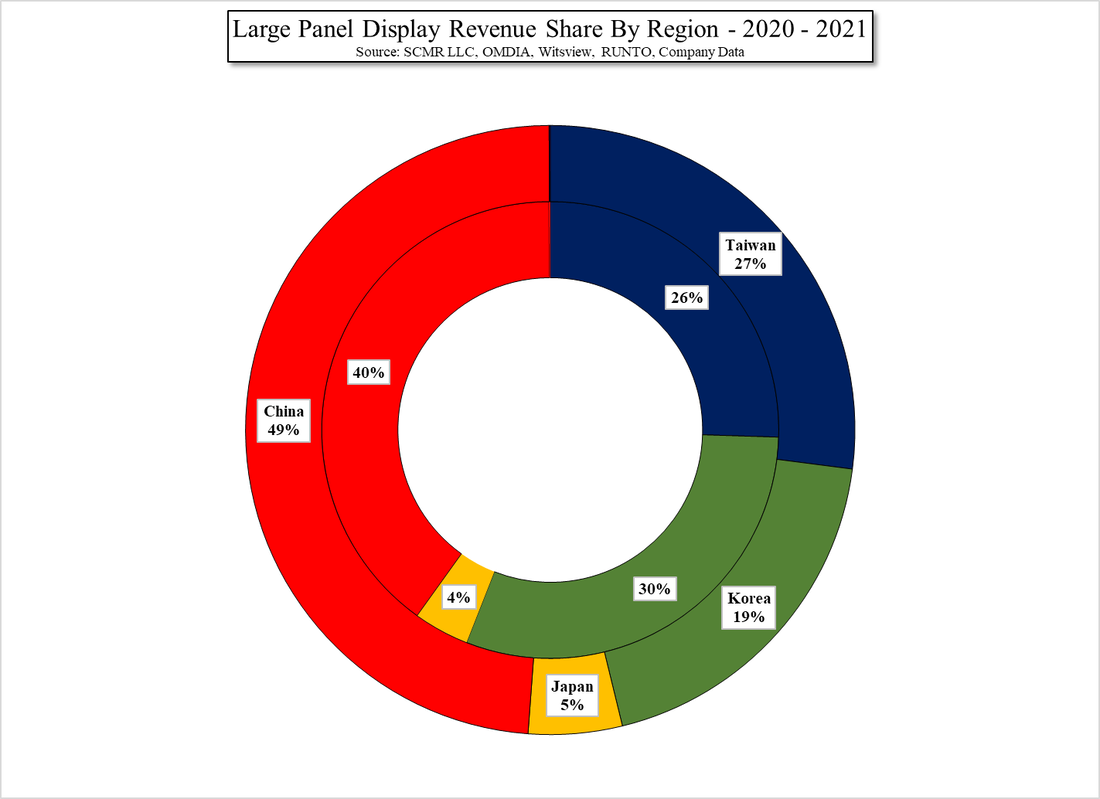

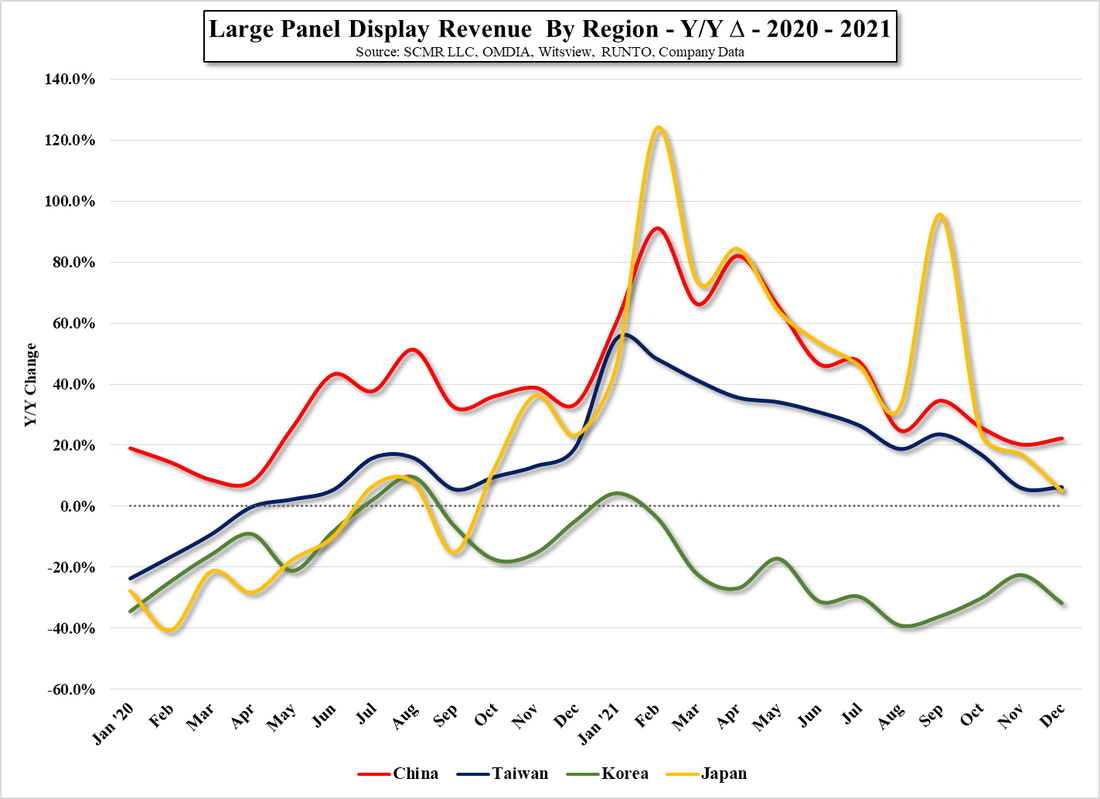

Most interesting was question #3, which we took from our display work. It was a complex question that required both math and logic and had a two-part answer. Depending on the number of decimal places the calculations are carried out to, there can be some variation in the correct answers for part 2, but part 1 was all or nothing. In March, 6 of the 8 AIs correctly answered part 1 of question #3 and 2 answered part 2 correctly (some were close), while in June 7 of 8 correctly answered part 1 and 4 answered part 2 correctly, and all but one were in the ballpark. It would seem that math scores have improved a bit over the last few months, although we have another math question to tally.
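As a quick check on those tallies, the sketch below converts the counts quoted above into percentages of the 8 AIs and the gain in percentage points. The counts come from the paragraph above; the variable and function names are our own illustrative choices.

```python
TOTAL_AIS = 8

# Number of AIs answering each part of question #3 correctly.
correct = {
    "March": {"part1": 6, "part2": 2},
    "June": {"part1": 7, "part2": 4},
}


def share(count: int, total: int = TOTAL_AIS) -> float:
    """Percentage of AIs that answered correctly."""
    return count / total * 100.0


# Percentage correct for each month and part.
shares = {
    month: {part: share(n) for part, n in parts.items()}
    for month, parts in correct.items()
}

# Improvement from March to June, in percentage points.
gains = {
    part: shares["June"][part] - shares["March"][part]
    for part in ("part1", "part2")
}

print(shares)
print(gains)
```

With only 8 AIs in the sample, one additional correct answer moves the share by 12.5 percentage points, so small shifts should be read with caution.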

While we hear almost daily how AIs are improving and how we are edging closer to AGI, the day-to-day reality of AI seems to be improving far more slowly, and quoted benchmarks seem less relevant. When we complete the data from our Q&A, we will try to present an honest picture of how things might have changed for the average AI user during the last quarter, and we will try to establish a quarterly check on how our AIs are improving or regressing. It’s not about benchmarks when you are sitting in a presentation and it turns out your AI was incorrect on the most recent figures you just presented. Benchmarks won’t save you from the “Mr. Carson wants to see you in his office. Now!” call.
